April 29, 2026 · Valentín Stancu

How to build a rival with persistent memory using Gemini

Technical devlog on how Mentium stores and uses player history so your rival actually remembers. Architecture, prompts, costs and trade-offs.

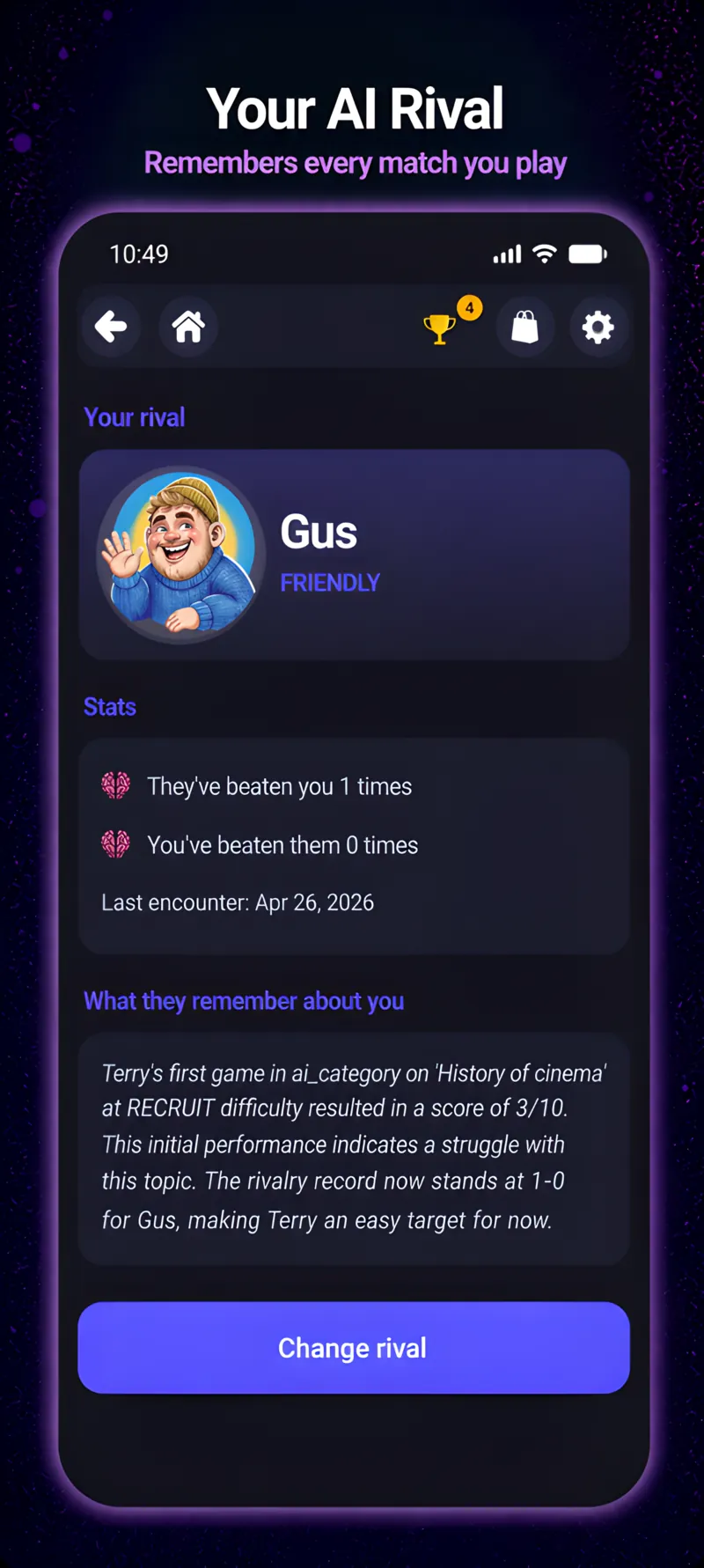

One of the Mentium features that took me longest to design — and the one that surprises beta players most — is the rival with persistent memory. Your rival isn’t a generic NPC spitting random lines. They remember how often you’ve beaten them, the topics you’re strong in, and where you slip. Their pre-match and post-match comments are unique to you.

Here’s how it’s built. I’m leaving out the prompt details (those are secret sauce), but the architecture, costs and real trade-offs are all here.

The problem

If you call Gemini with “write a post-match comment for a trivia rival”, you get something generic:

“Good game! On to the next one.”

Useless. Any game can do that with random.choice(["Nice!", "Keep going!"]). The interesting version is:

“You’ve beaten me 4 times this week, but all in mythology. Today I caught you on African geography — 4 misses out of 8. Coming prepared tomorrow, right?”

That sentence requires player context. That’s the challenge.

The architecture, step by step

1. Rival state, persisted in Firestore

Every player has a users/{uid}/rival_state document storing:

- personality: which of the 5 rivals they were assigned (Taunter, Sage, Underdog, Friendly, Calculator). Assigned on the 3rd match based on early play patterns.

- head_to_head: how many matches against the rival, wins/losses/draws, current streak.

- topic_stats: per category, player accuracy in matches vs the rival.

- last_5_summaries: condensed summaries of the last 5 matches (≤200 chars each) generated when each match closes.

- last_seen_ms: timestamp of last interaction to avoid weird “yesterday” references when they haven’t opened in 3 weeks.

Total: ~2 KB per player. Negligible storage-wise.
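To make the shape of that document concrete, here is a minimal Python sketch. The field names come from the list above; everything else (the class names, the nested layout of head_to_head and topic_stats, the signed streak encoding and the days_since_last_seen helper) is an assumption for illustration, not Mentium's actual schema.

```python
from dataclasses import dataclass, field, asdict

PERSONALITIES = ("Taunter", "Sage", "Underdog", "Friendly", "Calculator")
MS_PER_DAY = 86_400_000


@dataclass
class HeadToHead:
    matches: int = 0
    wins: int = 0    # from the player's point of view
    losses: int = 0
    draws: int = 0
    streak: int = 0  # assumed encoding: >0 player win streak, <0 losing streak


@dataclass
class RivalState:
    personality: str
    head_to_head: HeadToHead = field(default_factory=HeadToHead)
    # Assumed layout: category -> {"correct": int, "total": int}
    topic_stats: dict = field(default_factory=dict)
    last_5_summaries: list = field(default_factory=list)
    last_seen_ms: int = 0

    def to_document(self) -> dict:
        """Plain dict, ready to be written to users/{uid}/rival_state."""
        return asdict(self)


def days_since_last_seen(state: RivalState, now_ms: int) -> int:
    """Whole days since the last interaction, so the prompt can avoid
    saying 'yesterday' after a three-week absence."""
    return max(0, (now_ms - state.last_seen_ms) // MS_PER_DAY)
```

Keeping the state as plain counters and short strings is what keeps the document in the low-kilobyte range.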

2. Post-match summary (not full history)

The obvious mistake would be sending the full history to Gemini every match. I don’t. Three reasons:

- Tokens: at scale, long prompts multiplied by users and matches add up to a token volume that strains cost and rate limits.

- Latency: long prompts = slow responses. The user waits for the comment at the end of every match; it can’t take 4 seconds.

- Privacy: the less detail travels to the model, the better.

Instead, at the end of each match (in the background, so the user never waits) I run a tiny prompt asking:

“Generate a ONE-sentence summary, max 200 chars, of what happened in this match from the rival’s POV.”

Sample output: "Beat them 6-2 in classic cinema; missed two Hitchcock questions in a row."

That’s what I persist in last_5_summaries. The real history (questions, answers, times) stays local on device for Smart Review.
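The rolling window can be sketched in a few lines of Python. This is an illustration under assumptions: close_match, clamp_summary and the injected generate callable are hypothetical names, and generate stands in for whatever function actually calls the model. The hard cap is there because a model asked for "max 200 chars" can still overshoot.

```python
from collections import deque
from typing import Callable

MAX_SUMMARY_CHARS = 200
MAX_SUMMARIES = 5

SUMMARY_INSTRUCTION = (
    "Generate a ONE-sentence summary, max 200 chars, "
    "of what happened in this match from the rival's POV."
)


def clamp_summary(text: str, limit: int = MAX_SUMMARY_CHARS) -> str:
    """Collapse whitespace and enforce the length cap in code."""
    text = " ".join(text.split())
    if len(text) <= limit:
        return text
    return text[: limit - 1].rstrip() + "…"


def close_match(
    summaries: list[str],
    match_facts: str,
    generate: Callable[[str], str],
) -> list[str]:
    """Return the new last_5_summaries after one match closes.

    `generate` is a placeholder for the model call; it receives the
    prompt text and returns the raw summary.
    """
    prompt = f"{SUMMARY_INSTRUCTION}\n\nMatch facts: {match_facts}"
    window = deque(summaries, maxlen=MAX_SUMMARIES)  # oldest entry falls off
    window.append(clamp_summary(generate(prompt)))
    return list(window)
```

Injecting generate also makes the trimming logic testable without touching the network.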

3. Pre/post-match comment

When a rival comment is needed, the prompt includes:

- The personality (Taunter, Sage, etc.) + 2-3 sample tone lines.

- The last_5_summaries.

- Summarized head_to_head.

- The 2-3 most relevant topics for this match.

- (If post-match) what happened in this specific match.

Total size: ~500-800 tokens. Model: Gemini 2.5 Flash Lite (enough for this use, latency ≈ 800ms).

With temperature tuned to 0.7-0.8 and a strict-ish system instruction, outputs are consistent with the rival’s personality and almost never fabricate data not in context.
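The real prompts stay private, so the following is only a sketch of how the context listed above might be assembled into a single user message. The function name, the section labels and the wording are all assumptions; the character-based token estimate is a rough rule of thumb (about 4 characters per token for English text), not a real tokenizer.

```python
from typing import Optional


def build_comment_prompt(
    personality: str,
    tone_samples: list[str],
    summaries: list[str],
    head_to_head: str,
    topics: list[str],
    this_match: Optional[str] = None,
) -> str:
    """Assemble the context for a pre-match or post-match rival comment.

    Pass `this_match` only for post-match comments.
    """
    lines = [
        f"Rival personality: {personality}",
        "Tone samples:",
        *[f"- {sample}" for sample in tone_samples[:3]],
        "Recent matches (oldest first):",
        *[f"- {summary}" for summary in summaries[-5:]],
        f"Head to head: {head_to_head}",
        "Relevant topics: " + ", ".join(topics[:3]),
    ]
    if this_match is not None:
        lines.append(f"This match: {this_match}")
    return "\n".join(lines)


def rough_token_estimate(text: str) -> int:
    """Crude size check: roughly 4 characters per token for English."""
    return len(text) // 4
```

Capping each list in code (3 tone lines, 5 summaries, 3 topics) keeps the prompt size bounded no matter how much state accumulates.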

4. Defensive cache

If the user opens the main menu 3 times in 5 minutes, I don’t call Gemini 3 times. The result of the first call is cached locally and reused for 10 minutes. If the user plays a match in that interval, I invalidate the cache and call again (because there’s a new match in the history now).
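A cache like this fits in a small class. The sketch below is one possible shape, assuming a single cached comment per player on the device; RivalCommentCache and its method names are hypothetical, and the clock is injectable only so the expiry logic can be tested without waiting.

```python
import time
from typing import Callable, Optional


class RivalCommentCache:
    """Holds one generated comment for a fixed time-to-live."""

    def __init__(
        self,
        ttl_seconds: float = 600.0,  # 10 minutes
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._value: Optional[str] = None
        self._stored_at: Optional[float] = None

    def get_or_create(self, produce: Callable[[], str]) -> str:
        """Return the cached comment, calling `produce` only when the
        cache is empty or older than the TTL."""
        now = self._clock()
        if self._stored_at is not None and now - self._stored_at < self._ttl:
            return self._value
        self._value = produce()
        self._stored_at = now
        return self._value

    def invalidate(self) -> None:
        """Call when a match finishes, so the next comment sees it."""
        self._value = None
        self._stored_at = None
```

Usage would look like `cache.get_or_create(lambda: call_model(prompt))` on every menu open, where call_model is a placeholder for the real model call, plus `cache.invalidate()` when a match closes.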

What it does NOT do

Things I explicitly chose not to implement:

- Free chat with the rival. Your rival isn’t a chatbot. They only appear pre/post-match with generated lines. If I opened it to free chat, it would become an assistant, not a rival.

- Infinite memory. I only store the last 5 matches. If you won 3 months ago with a perfect score, the rival doesn’t remember. Most likely neither do you: just like life.

- Cross-rival memory. If you get assigned a new rival, it starts from zero. Each rival has its own memory with its own player.

What I learned

- Don’t ask the model what code can compute. “How many times have I beaten them” is a counter, not an AI call. Only invoke the model for what requires natural language.

- Condensed summaries > full history. Once you accept you’re not going to store everything, the whole system simplifies.

- Personality is prompt + sample tone, not fine-tuning. For 5 personalities, fine-tuning isn’t worth it. A good system prompt with 3-4 examples is enough.

- Defensive cache is mandatory. Otherwise model calls blow up for nothing.
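The first lesson is easy to show in code: the head-to-head record and per-topic accuracy are plain arithmetic. This sketch assumes dict-shaped state, 'win'/'loss'/'draw' result strings from the player's point of view, and a signed streak (positive for player wins, negative for losses); none of those details are confirmed by the post.

```python
from typing import Optional

RESULT_KEYS = {"win": "wins", "loss": "losses", "draw": "draws"}


def update_head_to_head(h2h: dict, result: str) -> dict:
    """Return a new head_to_head dict after one match."""
    if result not in RESULT_KEYS:
        raise ValueError(f"unknown result: {result!r}")
    updated = dict(h2h)
    updated["matches"] = h2h.get("matches", 0) + 1
    key = RESULT_KEYS[result]
    updated[key] = h2h.get(key, 0) + 1

    streak = h2h.get("streak", 0)
    if result == "win":
        updated["streak"] = streak + 1 if streak > 0 else 1
    elif result == "loss":
        updated["streak"] = streak - 1 if streak < 0 else -1
    else:
        updated["streak"] = 0  # assumed: a draw resets the streak
    return updated


def topic_accuracy(topic_stats: dict, category: str) -> Optional[float]:
    """Fraction of correct answers in a category, or None if unseen."""
    stats = topic_stats.get(category)
    if not stats or stats["total"] == 0:
        return None
    return stats["correct"] / stats["total"]
```

The model then only receives the finished numbers (for example "4 misses out of 8 in African geography") and spends its tokens on phrasing.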

If you like this kind of technical detail and want to see Mentium in action, you can download it for free on Google Play: Mentium 1.0 “Curie” (early access).

— Valentín
